SAS has been the backbone of enterprise analytics for decades. Banks, insurers, pharmaceutical companies, and government agencies built entire data ecosystems on SAS — thousands of programs, macro libraries, format catalogs, and scheduled jobs running nightly production pipelines. But the economics and technology landscape have shifted, and organizations are now actively planning migrations away from SAS toward modern, open-source alternatives.

Among the modern targets, dbt (data build tool) has emerged as the leading transformation framework for teams that want SQL-first pipelines with software engineering practices baked in. This guide walks through the complete journey from SAS to dbt — from the business case through construct mapping, code translation, testing strategies, and lineage preservation.

Why SAS Shops Are Moving to dbt

The migration from SAS is driven by four converging forces, each powerful on its own and overwhelming in combination.

Licensing costs. SAS licensing typically runs $50,000 to $500,000+ per year depending on the modules, number of users, and compute capacity. For large enterprises with multiple SAS environments across development, staging, and production, the annual spend can exceed $1 million. dbt Core is open-source and free. dbt Cloud pricing scales with usage but remains a fraction of SAS licensing for comparable workloads.

Declining talent pool. Universities no longer teach SAS as a primary language. Data science and analytics programs focus on Python, SQL, and R. New graduates enter the workforce fluent in SELECT statements and Pandas DataFrames, not DATA steps and PROC MEANS. Hiring and retaining SAS developers becomes harder and more expensive each year.

Cloud warehouse incompatibility. SAS was designed for on-premises execution against local datasets or direct database connections. Integrating SAS with cloud-native warehouses like Snowflake, BigQuery, or Databricks requires awkward bridging — SAS/ACCESS engines, ODBC connections, or data extraction-and-reload patterns that negate the benefits of elastic cloud compute. dbt runs natively inside the warehouse, leveraging its full compute power.

Lack of modern development practices. SAS programs are typically stored on shared file systems without version control. There is no built-in mechanism for pull request reviews, automated testing, or CI/CD deployment. Collaboration relies on tribal knowledge and informal conventions. dbt projects live in Git repositories where every change is tracked, reviewed, tested, and deployed through automated pipelines.

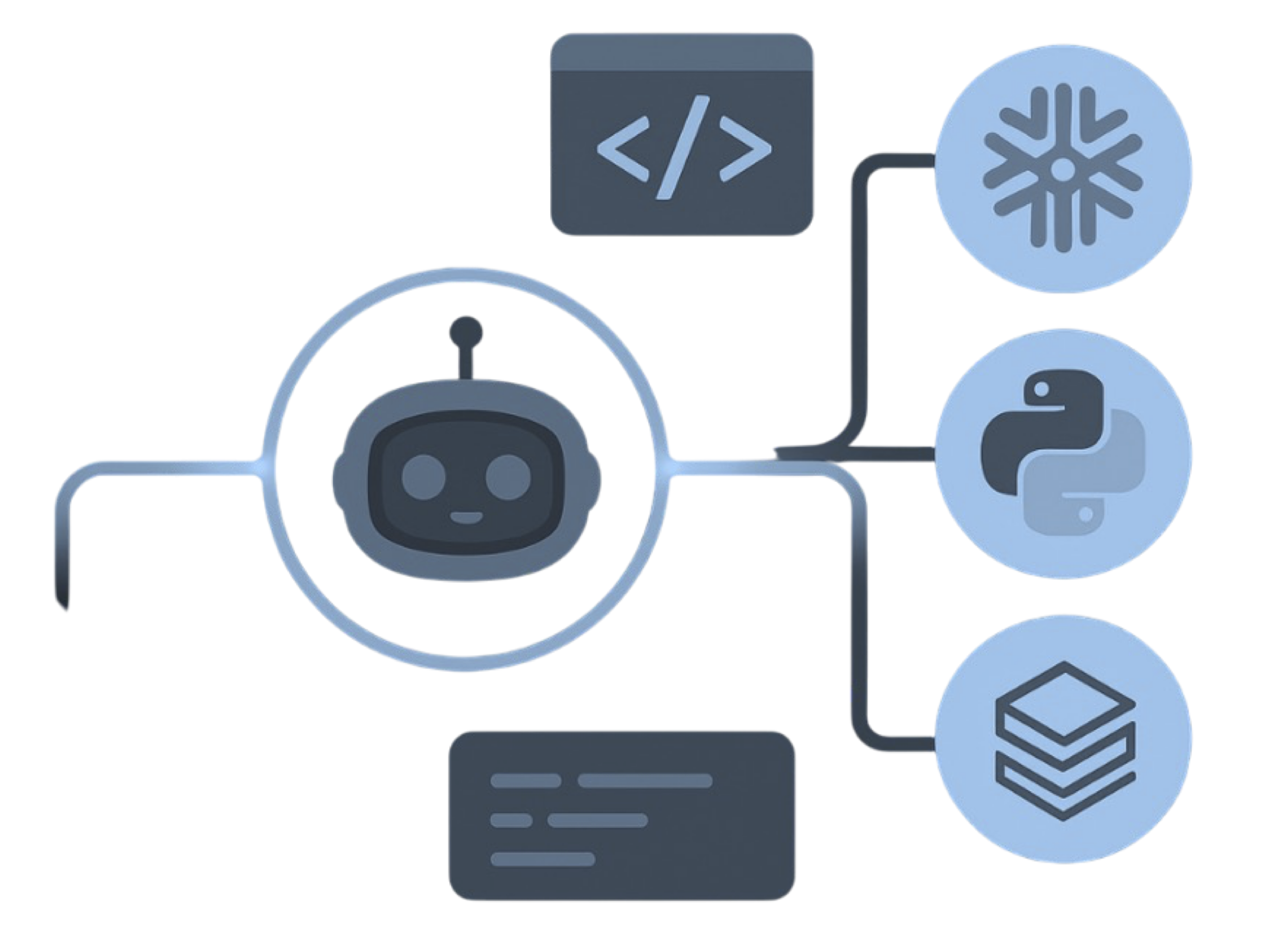

SAS to dbt migration — automated end-to-end by MigryX

Conceptual Mapping: SAS to dbt

Before writing a single line of converted code, teams need a clear mental model of how SAS constructs map to dbt equivalents. The following table provides that mapping.

| SAS Construct | dbt Equivalent | Notes |
|---|---|---|
| SAS Macros (%macro/%mend) | Jinja macros | Parameterized SQL generation with conditional logic |
| PROC SQL | dbt models | SQL-first models using ref() and source() |
| DATA step | dbt models with CTEs | Row logic restructured into set-based SQL |
| LIBNAME statements | dbt sources | External table definitions with freshness monitoring |

This mapping is not always one-to-one. SAS DATA step logic — particularly programs that use iterative row processing, retain statements, arrays, and hash objects — requires restructuring into set-based SQL with CTEs. This is where automated conversion tools like MigryX provide the most value, handling the pattern recognition and restructuring that would take engineers hours per program.
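To make the restructuring concrete, here is a minimal sketch of one common case: a running total that a SAS DATA step would keep in a RETAIN-ed accumulator with BY-group processing. The table, column names, and sample rows are hypothetical, and SQLite (via Python's standard library) stands in for a cloud warehouse. The SQL string is roughly what the body of a dbt model could look like, with a CTE and a window function replacing the row-by-row loop.

```python
import sqlite3

# Hypothetical sample data: per-customer transactions, deliberately unsorted.
ROWS = [
    ("A", "2024-01-01", 100.0),
    ("A", "2024-01-05", 50.0),
    ("B", "2024-01-02", 20.0),
    ("A", "2024-01-09", 25.0),
    ("B", "2024-01-07", 30.0),
]

# Set-based equivalent of a RETAIN accumulator reset at each BY group:
# a CTE plus a window function. Window functions need SQLite 3.25 or later.
RUNNING_TOTAL_SQL = """
with txns as (
    select customer_id, txn_date, amount
    from transactions
)
select
    customer_id,
    txn_date,
    sum(amount) over (
        partition by customer_id
        order by txn_date
        rows between unbounded preceding and current row
    ) as running_total
from txns
order by customer_id, txn_date
"""


def running_totals(data):
    """Load the rows into an in-memory table and return the running totals."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "create table transactions (customer_id text, txn_date text, amount real)"
    )
    conn.executemany("insert into transactions values (?, ?, ?)", data)
    result = conn.execute(RUNNING_TOTAL_SQL).fetchall()
    conn.close()
    return result


if __name__ == "__main__":
    for row in running_totals(ROWS):
        print(row)
```

The `partition by` clause plays the role of the SAS BY group, and the window frame plays the role of the retained variable. Patterns involving arrays or hash objects usually need different set-based techniques, such as unpivoting or joins, and are not covered by this sketch.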

MigryX: Idiomatic Code, Not Line-by-Line Translation

The difference between MigryX and manual migration is not just speed — it is code quality. MigryX generates idiomatic, platform-optimized code that leverages native features of your target platform. A SAS DATA step does not become a clunky row-by-row loop — it becomes a clean, vectorized DataFrame operation. A PROC SQL query does not become a literal translation — it becomes an optimized query that takes advantage of your platform’s pushdown capabilities.

Code Examples Side-by-Side

Seeing real code makes the mapping concrete. Consider a common SAS pattern: a parameterized macro that aggregates monthly revenue from a transactions table.

SAS Version

    /* SAS: Monthly revenue macro */
    %macro monthly_revenue(start_dt, end_dt);
      proc sql;
        create table work.revenue as
        select customer_id,
               sum(amount) as total_revenue
        from sales.transactions
        where txn_date between "&start_dt"d and "&end_dt"d
        group by customer_id;
      quit;
    %mend;

    /* Invocation */
    %monthly_revenue(01JAN2024, 31JAN2024);

What MigryX generates: MigryX translates this SAS macro into an idiomatic dbt model with proper Jinja parameterization, ref() calls, and materialization configuration.
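The article does not reproduce the generated model, so the following is a hand-written illustration rather than MigryX output. It runs the same aggregation as the SAS macro against an in-memory SQLite table with hypothetical sample rows. In an actual dbt model, the table would most likely be referenced through `{{ source('sales', 'transactions') }}` and the date bounds supplied through `{{ var('start_dt') }}` and `{{ var('end_dt') }}`; bound parameters stand in for those here.

```python
import sqlite3

# Roughly the SELECT a dbt model body might hold (an assumption, not
# generated output). Named parameters stand in for dbt's var() calls.
MONTHLY_REVENUE_SQL = """
select
    customer_id,
    sum(amount) as total_revenue
from transactions
where txn_date between :start_dt and :end_dt
group by customer_id
order by customer_id
"""


def monthly_revenue(conn, start_dt, end_dt):
    """Return (customer_id, total_revenue) rows for an ISO-formatted date range."""
    params = {"start_dt": start_dt, "end_dt": end_dt}
    return conn.execute(MONTHLY_REVENUE_SQL, params).fetchall()


def demo_connection():
    """Build an in-memory table holding hypothetical sample transactions."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "create table transactions (customer_id text, txn_date text, amount real)"
    )
    conn.executemany(
        "insert into transactions values (?, ?, ?)",
        [
            ("C1", "2024-01-03", 120.0),
            ("C1", "2024-01-20", 80.0),
            ("C2", "2024-01-15", 40.0),
            ("C2", "2024-02-01", 999.0),  # outside January, so excluded
        ],
    )
    return conn


if __name__ == "__main__":
    # Same range as the SAS invocation: 01JAN2024 through 31JAN2024.
    print(monthly_revenue(demo_connection(), "2024-01-01", "2024-01-31"))
```

Note the change in date handling: SAS date literals such as "01JAN2024"d become ISO-formatted values, which compare correctly both as strings in SQLite and as native dates in a warehouse.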

For more complex patterns — such as SAS macros that conditionally join multiple tables based on parameter flags — MigryX produces equivalent Jinja macros with {% if %} blocks, preserving the conditional logic in a syntax that is more readable for modern data engineers.

MigryX precision parser — Deep AST-level analysis ensures every construct is understood before conversion begins

Platform-Specific Optimization by MigryX

MigryX maintains deep knowledge of every target platform’s strengths and best practices. When converting to Snowflake, it leverages Snowpark and native SQL functions. When targeting Databricks, it uses PySpark DataFrame operations optimized for distributed execution. When generating dbt models, it follows dbt best practices for modularity and testability. This platform awareness is what makes MigryX output production-ready from day one.

Testing Translation

SAS validation is typically implemented through a combination of PROC COMPARE (for dataset-level comparison), PROC FREQ (for distribution checks), and custom DATA step logic that writes exceptions to log files. These validation scripts are often informal, inconsistently applied, and disconnected from the production pipeline.

dbt inverts this model. Testing is a first-class feature of the framework, not an afterthought. Tests are defined alongside models and run as part of every pipeline execution.
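The core idea behind a dbt data test is simple: a test is a query that selects failing rows, and it passes when the query returns nothing. The sketch below imitates two of dbt's built-in generic tests, `not_null` and `unique`, using Python's standard library and SQLite. The helper functions, table, and sample rows are hypothetical and only illustrate the principle; in dbt itself these checks are declared in YAML next to the model rather than written by hand.

```python
import sqlite3


def not_null_failures(conn, table, column):
    """Return rows where the column is NULL. An empty list means the check passes."""
    # Identifiers are trusted constants in this sketch, not user input.
    sql = f"select * from {table} where {column} is null"
    return conn.execute(sql).fetchall()


def unique_failures(conn, table, column):
    """Return values that occur more than once. An empty list means the check passes."""
    sql = f"""
        select {column}, count(*) as n
        from {table}
        where {column} is not null
        group by {column}
        having count(*) > 1
    """
    return conn.execute(sql).fetchall()


def demo_connection():
    """Build an in-memory table with one NULL email and one duplicated key."""
    conn = sqlite3.connect(":memory:")
    conn.execute("create table customers (customer_id text, email text)")
    conn.executemany(
        "insert into customers values (?, ?)",
        [
            ("C1", "a@example.com"),
            ("C2", None),
            ("C2", "c@example.com"),
        ],
    )
    return conn


if __name__ == "__main__":
    conn = demo_connection()
    print("not_null(email) failures:", not_null_failures(conn, "customers", "email"))
    print("unique(customer_id) failures:", unique_failures(conn, "customers", "customer_id"))
```

Compared with a PROC COMPARE run whose result someone has to read in a log, a failing-rows query gives the pipeline an unambiguous pass or fail signal that can stop a deployment.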

MigryX generates a comprehensive dbt testing layer — schema tests, data tests, and freshness checks — derived from the original SAS validation logic.

Project Structure Best Practices

One of the most valuable aspects of migrating from SAS to dbt is the opportunity to impose structure on what is often an ad-hoc collection of programs. MigryX generates dbt projects following the staging/intermediate/mart pattern that the dbt community has established as best practice.


Intermediate Models

Intermediate models encapsulate business logic — joins between staging models, conditional transformations, window functions, and aggregations that serve multiple downstream consumers. This is where the bulk of SAS DATA step and PROC SQL logic lands after conversion.

Mart Models

Mart models are the analytics-ready output — wide, denormalized tables optimized for dashboard consumption, report generation, or ML feature stores. They reference intermediate models and are typically materialized as tables for performance.

This layered architecture is a significant improvement over the flat SAS program structure where a single .sas file might read raw data, apply business logic, aggregate results, and produce a report in one monolithic script. The dbt structure makes each transformation step independently testable, documentable, and reusable.

Lineage Comparison

Data lineage — understanding where data comes from, how it is transformed, and where it goes — is critical for regulatory compliance, debugging, and impact analysis. SAS and dbt approach lineage in fundamentally different ways.

SAS lineage is opaque. Lineage information is locked inside SAS metadata servers (if you use SAS Data Integration Studio) or must be traced manually by reading LIBNAME statements and following DATA step input/output datasets. In most SAS environments, lineage is tribal knowledge: the senior developer who wrote the code knows the dependencies, and when that person leaves, the knowledge leaves with them.

dbt lineage is automatic and complete. Every ref() call creates a dependency edge in the project's DAG (directed acyclic graph). Every source() call maps to an external table. The dbt docs generate command produces an interactive lineage graph that shows every model, test, source, and exposure and how they connect. This graph updates automatically when the code changes.
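As a rough illustration of how ref() calls become a dependency graph, the sketch below scans three hypothetical model definitions for `ref()` calls and derives a build order with Python's standard `graphlib` module. This is a simplification: dbt resolves references by rendering the Jinja, not by pattern matching, and the model names and SQL here are invented for the example.

```python
import re
from graphlib import TopologicalSorter  # available in Python 3.9+

# Hypothetical models keyed by name; the SQL is illustrative only.
MODELS = {
    "mart_revenue_summary": "select * from {{ ref('int_customer_revenue') }}",
    "int_customer_revenue": (
        "select customer_id, sum(amount) as total_revenue "
        "from {{ ref('stg_transactions') }} group by 1"
    ),
    "stg_transactions": "select * from {{ source('sales', 'transactions') }}",
}

# Matches {{ ref('model_name') }} with either quote style.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")


def build_dag(models):
    """Map each model to the set of models it references via ref()."""
    return {name: set(REF_PATTERN.findall(sql)) for name, sql in models.items()}


def build_order(models):
    """Return model names ordered so that every dependency comes first."""
    return list(TopologicalSorter(build_dag(models)).static_order())


if __name__ == "__main__":
    for model, parents in build_dag(MODELS).items():
        print(f"{model} <- {sorted(parents)}")
    print("build order:", build_order(MODELS))
```

Because the graph is derived from the code itself, it cannot drift out of date the way a hand-maintained dependency spreadsheet can. The `source()` call is deliberately not treated as a model dependency here; it marks the boundary where data enters the project.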

For organizations in regulated industries — banking, insurance, healthcare, pharmaceuticals — this lineage transparency is not a convenience feature. It is a compliance requirement. Regulators expect organizations to trace any reported number back to its source data. dbt's automatic lineage makes this trivial; SAS's opaque lineage makes it an expensive manual exercise.

Lineage Preservation with MigryX

MigryX preserves column-level lineage through the conversion process. Every SAS variable traced from source to output is mapped to its dbt model equivalent, and the lineage metadata is exported as dbt docs descriptions and exposure definitions. The result is a fully navigable lineage graph on day one of the dbt migration.

The journey from SAS to dbt is not just a technology migration. It is a shift from proprietary, opaque, manually managed data pipelines to open-source, transparent, automatically tested and documented ones. The conceptual mappings are well understood, the tooling is mature, and the community support is extensive. For organizations still running SAS, the question is no longer whether to migrate, but how quickly and safely the migration can be executed.

Why MigryX Delivers Superior Migration Results

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Production-ready output: MigryX generates code that passes code review and runs in production — not prototype-quality output that needs weeks of cleanup.

- Platform optimization: Converted code leverages target platform-specific features for maximum performance and cost efficiency.

- 25+ source technologies: Whether migrating from SAS, Informatica, DataStage, SSIS, or any of 25+ legacy technologies, MigryX handles it.

- Automated documentation: Every conversion decision is documented with before/after code mappings and transformation rationale.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
