The modern data lake promised cheap, scalable storage for every type of data. It delivered on the storage part. But reliability, consistency, and performance? Those remained firmly in the domain of expensive data warehouses. Apache Iceberg changes that equation entirely, bringing warehouse-grade reliability to lake economics without requiring you to abandon open standards or lock yourself into a single vendor.

For organizations running legacy ETL platforms like SAS, Informatica, DataStage, or Talend, Iceberg represents more than a storage format. It represents the destination: an open, high-performance table layer that makes your data accessible to every modern engine in your stack.

What Is Apache Iceberg?

Apache Iceberg is an open table format designed for huge analytic datasets. Originally created at Netflix to solve the limitations of Apache Hive tables at petabyte scale, Iceberg was donated to the Apache Software Foundation and graduated as a top-level project in 2020. It has since become one of the most consequential open-source projects in the data engineering ecosystem.

At its core, Iceberg adds critical capabilities that traditional data lake storage lacks: ACID transactions, schema evolution, hidden partitioning, and time travel. It works on top of standard object storage (Amazon S3, Azure Data Lake Storage, Google Cloud Storage) and standard file formats (Parquet, ORC, Avro). Think of it as a metadata layer that transforms a pile of files into a proper table with database-like guarantees.

Unlike proprietary formats that tie your data to a specific compute engine, Iceberg tables are defined by an open specification. Any engine that implements the specification can read and write Iceberg tables. This separation of storage from compute is what makes Iceberg transformative: your data is truly yours, stored in open formats, described by open metadata, and accessible from any compatible engine.

The practical impact is immediate. Teams no longer need to choose between the reliability of a data warehouse and the flexibility of a data lake. Iceberg delivers both. Concurrent readers and writers operate safely on the same table. Schema changes happen without rewriting data. Partition strategies evolve as query patterns change. And every change is tracked in an immutable metadata log that enables point-in-time queries across the entire history of the table.

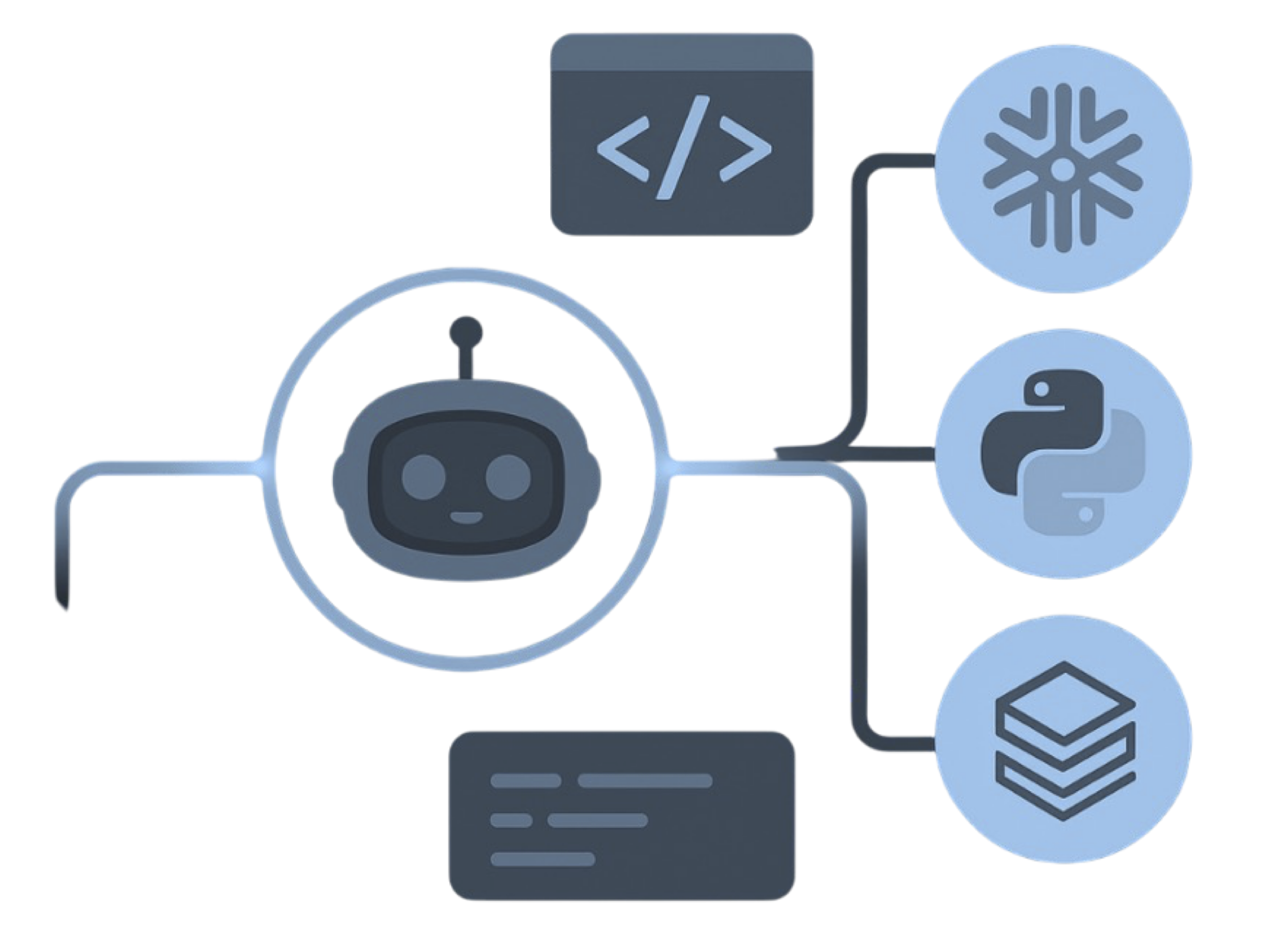

Apache Iceberg — enterprise migration powered by MigryX

Core Capabilities

Iceberg's feature set addresses the specific pain points that have plagued data lake implementations for years. Each capability solves a real problem that data engineers encounter daily.

Hidden Partitioning

Traditional Hive-style partitioning requires users to know the exact partition layout and include partition columns in their queries. If the table is partitioned by year/month/day, users must filter on those columns or risk full table scans. Iceberg introduces hidden partitioning: the table is partitioned by a transform on a source column (e.g., days(event_date)), but users simply query event_date as they normally would. The engine automatically prunes partitions without any user awareness of the underlying layout. This eliminates an entire class of performance bugs and makes partition evolution possible without user-facing changes.
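The idea can be sketched in a few lines of plain Python. This is a simplified model, not Iceberg's implementation: the day transform maps a timestamp to whole days since 1970-01-01, and the planner applies the same transform to the query's bounds to decide which partitions to read. The partitions dictionary, the file names, and files_for_range are hypothetical names chosen for illustration.

```python
from datetime import date, datetime

EPOCH = date(1970, 1, 1)

def day_transform(ts: datetime) -> int:
    """Map a timestamp to a partition value: whole days since 1970-01-01."""
    return (ts.date() - EPOCH).days

# Hypothetical partition index: partition value -> data files in that partition.
partitions = {}
events = [
    (datetime(2024, 3, 1, 9, 30), "a.parquet"),
    (datetime(2024, 3, 1, 23, 59), "b.parquet"),
    (datetime(2024, 3, 2, 0, 5), "c.parquet"),
    (datetime(2024, 3, 5, 12, 0), "d.parquet"),
]
for ts, path in events:
    partitions.setdefault(day_transform(ts), []).append(path)

def files_for_range(start: datetime, end: datetime) -> list:
    """Return files whose partition could hold rows with start <= event_date <= end.

    The caller filters on event_date only; the transform is applied here,
    so the partition layout never appears in the query.
    """
    lo, hi = day_transform(start), day_transform(end)
    selected = []
    for value in sorted(partitions):
        if lo <= value <= hi:
            selected.extend(partitions[value])
    return selected

# A query for March 1-2 never touches the March 5 partition.
march_files = files_for_range(datetime(2024, 3, 1), datetime(2024, 3, 2, 23, 59))
```

A real engine would still apply the row-level filter inside the selected files; pruning only narrows the set of files that have to be opened.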

Schema Evolution

Adding, renaming, dropping, or reordering columns in Iceberg is a metadata-only operation. No data files are rewritten. Iceberg tracks columns by unique IDs rather than by name or position, which means existing data files remain valid even after schema changes. This is a stark contrast to Hive tables, where schema changes often require full table rebuilds or result in corrupted reads.
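A toy model shows why ID-based tracking keeps old files readable. The sketch below assumes a data file stores values keyed by field ID and that the schema is only a name-to-ID mapping kept in metadata; Schema, rename_column, add_column, drop_column, and read are hypothetical helpers, not Iceberg API calls.

```python
from dataclasses import dataclass

@dataclass
class Schema:
    fields: dict          # column name -> field ID
    last_column_id: int   # highest ID ever assigned; IDs are never reused

def rename_column(schema: Schema, old: str, new: str) -> Schema:
    """Rename keeps the field ID, so existing data files need no rewrite."""
    fields = {(new if n == old else n): fid for n, fid in schema.fields.items()}
    return Schema(fields, schema.last_column_id)

def add_column(schema: Schema, name: str) -> Schema:
    """A new column always gets a fresh ID, even after earlier drops."""
    new_id = schema.last_column_id + 1
    return Schema({**schema.fields, name: new_id}, new_id)

def drop_column(schema: Schema, name: str) -> Schema:
    """Drop removes the name from metadata; the stored values stay untouched."""
    fields = {n: fid for n, fid in schema.fields.items() if n != name}
    return Schema(fields, schema.last_column_id)

def read(rows: list, schema: Schema) -> list:
    """Project stored rows through the current schema; absent IDs read as None."""
    return [{n: row.get(fid) for n, fid in schema.fields.items()} for row in rows]

# Rows as written under the original schema, keyed by field ID.
data_file_rows = [{1: 101, 2: "alice"}, {1: 102, 2: "bob"}]

v1 = Schema({"id": 1, "name": 2}, last_column_id=2)
v2 = rename_column(v1, "name", "full_name")
v3 = add_column(drop_column(v2, "full_name"), "email")
```

Reading data_file_rows through v2 returns the old values under the new column name, and reading through v3 returns None for email rather than resurrecting the dropped column, because the new column received ID 3 instead of reusing ID 2.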

Time Travel

Every write to an Iceberg table creates an immutable snapshot. Users can query any retained snapshot by timestamp or snapshot ID (snapshots remain available until they are explicitly expired). This enables reproducible analytics, audit trails, and easy rollback from bad writes. In regulated industries, time travel helps satisfy auditability requirements that previously required complex custom solutions.
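Resolving a point-in-time query comes down to a lookup in the snapshot log. The following is a minimal sketch under the assumption that the log is an ordered list of (snapshot ID, commit time, file list) records; Snapshot, snapshot_as_of, and snapshot_by_id are hypothetical names for illustration, not the Iceberg API.

```python
from bisect import bisect_right
from dataclasses import dataclass

@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    timestamp_ms: int   # commit time in milliseconds
    files: tuple        # data files visible in this snapshot

# Hypothetical snapshot log, ordered by commit time.
history = [
    Snapshot(1, 1000, ("a.parquet",)),
    Snapshot(2, 2000, ("a.parquet", "b.parquet")),
    Snapshot(3, 3000, ("c.parquet",)),   # e.g. an overwrite
]

def snapshot_as_of(log: list, timestamp_ms: int) -> Snapshot:
    """Return the latest snapshot committed at or before timestamp_ms."""
    times = [s.timestamp_ms for s in log]
    idx = bisect_right(times, timestamp_ms)
    if idx == 0:
        raise ValueError("no snapshot exists at or before the requested time")
    return log[idx - 1]

def snapshot_by_id(log: list, snapshot_id: int) -> Snapshot:
    """Return the snapshot with the given ID."""
    for snapshot in log:
        if snapshot.snapshot_id == snapshot_id:
            return snapshot
    raise KeyError(snapshot_id)
```

Because each snapshot carries its own complete file list, rolling back is only a matter of pointing the table at an earlier snapshot; no data files are copied or rewritten.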

ACID Transactions

Iceberg provides serializable isolation for writes. Each commit atomically swaps the table's current metadata pointer, and concurrent writers resolve conflicts through optimistic concurrency control: a writer whose base version is stale must retry against the latest table state. Readers always see a consistent snapshot of the table, even while writes are in progress. This eliminates the "dirty read" and "partial write" problems that are endemic to directory-based table formats.
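Optimistic concurrency control can be illustrated with a compare-and-swap loop. The sketch below is a simplified in-memory model: Catalog stands in for whatever service holds the table's current metadata pointer, and append is a hypothetical writer. Neither is an Iceberg class.

```python
import threading

class Catalog:
    """Hypothetical catalog holding one table's current version and file list."""

    def __init__(self):
        self._lock = threading.Lock()
        self.version = 0
        self.files = ()

    def load(self):
        """Read a consistent (version, files) pair."""
        with self._lock:
            return self.version, self.files

    def compare_and_swap(self, expected_version: int, new_files: tuple) -> bool:
        """Commit new_files only if nobody else committed since expected_version."""
        with self._lock:
            if self.version != expected_version:
                return False  # conflict: the writer's base state is stale
            self.version += 1
            self.files = new_files
            return True

def append(catalog: Catalog, new_file: str, max_retries: int = 5) -> int:
    """Append a file, re-reading table state and retrying on conflict."""
    for _ in range(max_retries):
        version, files = catalog.load()
        if catalog.compare_and_swap(version, files + (new_file,)):
            return version + 1
    raise RuntimeError("commit failed after retries")
```

A writer that prepared its commit against an older version is rejected and has to rebuild the commit on the latest state, while readers that already loaded a file list keep working against that list unaffected.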

Metadata-Driven Pruning

Iceberg maintains column-level statistics (value count, null count, and lower and upper bounds) for every data file in the table. When a query includes predicates, the engine compares them against these statistics and skips entire data files without opening them. For large tables with thousands of data files, this metadata-driven pruning can reduce query times by orders of magnitude compared to formats that require listing and opening files to determine relevance.
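File skipping with min/max statistics is a range-overlap test. The sketch below assumes a single integer column and an in-memory list of per-file statistics; FileStats, may_contain, and plan_scan are hypothetical names used for illustration only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FileStats:
    path: str
    lower: int   # smallest order_id stored in the file
    upper: int   # largest order_id stored in the file

def may_contain(stats: FileStats, lo: int, hi: int) -> bool:
    """True if the file's [lower, upper] range overlaps the predicate range [lo, hi]."""
    return stats.lower <= hi and stats.upper >= lo

def plan_scan(manifest: list, lo: int, hi: int) -> list:
    """Return only the files that could hold rows with lo <= order_id <= hi."""
    return [f.path for f in manifest if may_contain(f, lo, hi)]

# Hypothetical per-file statistics as they might appear in table metadata.
manifest = [
    FileStats("f1.parquet", 1, 100),
    FileStats("f2.parquet", 101, 200),
    FileStats("f3.parquet", 150, 300),
]

# WHERE order_id BETWEEN 120 AND 160 can skip f1.parquet without opening it.
to_read = plan_scan(manifest, 120, 160)
```

The test is conservative: a file that passes may still contain no matching rows, but a file that fails is guaranteed to contain none, so skipping it is always safe.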

MigryX: Idiomatic Code, Not Line-by-Line Translation

The difference between MigryX and manual migration is not just speed — it is code quality. MigryX generates idiomatic, platform-optimized code that leverages native features of your target platform. A SAS DATA step does not become a clunky row-by-row loop — it becomes a clean, vectorized DataFrame operation. A PROC SQL query does not become a literal translation — it becomes an optimized query that takes advantage of your platform’s pushdown capabilities.

Iceberg vs. Hive Tables

The comparison between Iceberg and Hive tables illustrates why the industry is migrating away from the original data lake table format.

Hive tables are fundamentally directory-based. A table is a directory, partitions are subdirectories, and the metastore tracks partition locations rather than individual files. This design has several consequences: there is no atomic write operation (a failed write can leave partial data visible), schema changes are fragile (columns are resolved by name or position, so a rename or reorder can leave existing data files unreadable or silently misread), partition management is manual and error-prone, and query planning requires listing directories, which becomes catastrophically slow at scale.

Iceberg takes the opposite approach. Every data file is tracked individually in the metadata layer. Writes are atomic: either all files from a commit are visible, or none are. Schema changes are tracked by column IDs, not names. Partition specs are stored in metadata and can evolve without rewriting data. And query planning uses file-level statistics, eliminating directory listing entirely.

The performance difference on large tables is dramatic. Queries that take minutes on Hive tables due to directory listing and full partition scans often complete in seconds on Iceberg tables thanks to metadata-driven file pruning. For tables with thousands of partitions and millions of files, the improvement can be 10-100x for common query patterns.

MigryX precision parser — Deep AST-level analysis ensures every construct is understood before conversion begins

Platform-Specific Optimization by MigryX

MigryX maintains deep knowledge of every target platform’s strengths and best practices. When converting to Snowflake, it leverages Snowpark and native SQL functions. When targeting Databricks, it uses PySpark DataFrame operations optimized for distributed execution. When generating dbt models, it follows dbt best practices for modularity and testability. This platform awareness is what makes MigryX output production-ready from day one.

Multi-Engine Support

If there is a single killer feature that explains Iceberg's rapid adoption, it is multi-engine support. An Iceberg table can be written by one engine and read by a completely different one. This is not a theoretical capability; it is the primary architectural pattern in production deployments.

The list of engines with production-grade Iceberg support is extensive and growing: Apache Spark, Trino (formerly PrestoSQL), Apache Flink, Dremio, Snowflake, Databricks, Amazon Athena, Google BigQuery, StarRocks, and Apache Doris, among others. Write data with a Spark batch job, query it with Trino for interactive analytics, stream updates with Flink, and surface results in Snowflake for business users. All engines operate on the same physical table, the same files, the same metadata. No data copies. No synchronization jobs. No vendor lock-in.

This multi-engine capability fundamentally changes how organizations architect their data platforms. Instead of choosing a single vendor and building everything on their stack, teams can select the best engine for each workload. Batch processing on Spark, interactive queries on Trino, streaming on Flink, and BI on Snowflake. Iceberg is the common layer that makes this polyglot architecture practical.

Industry Adoption

Iceberg's adoption has accelerated from early adopters to mainstream enterprise deployment in a remarkably short period. Netflix, where Iceberg was created, manages petabytes of data in Iceberg tables. Apple operates one of the largest Iceberg deployments in the world, with exabytes of data under management. LinkedIn, Airbnb, Stripe, Expedia, and hundreds of other technology companies have standardized on Iceberg for their data lake storage.

The enterprise adoption wave is being driven by several converging factors. First, cloud data platform vendors have embraced Iceberg: Snowflake natively reads and writes Iceberg tables, Databricks supports Iceberg through Delta UniForm, and AWS has integrated Iceberg into Athena, EMR, and Glue. Second, the Iceberg REST catalog specification has enabled a new class of catalog services. AWS Glue, Tabular (now part of Databricks), Polaris, Nessie, and Unity Catalog all support the REST catalog API, meaning organizations can manage Iceberg metadata through standardized interfaces without proprietary lock-in.

Third, and perhaps most importantly, the cost argument is compelling. Organizations migrating from proprietary data warehouses to Iceberg on object storage routinely report 60-80% reductions in storage and compute costs while gaining capabilities (time travel, multi-engine access, schema evolution) that their previous platform could not provide.

How MigryX Generates Iceberg-Native Output

For organizations migrating from legacy ETL platforms, Iceberg is the ideal target format. But generating correct, performant Iceberg-native code from SAS, Informatica, DataStage, or Talend source code requires deep understanding of both the source platform semantics and the Iceberg table format.

MigryX converts legacy ETL code into PySpark that writes to Iceberg tables natively. The conversion engine does not simply translate syntax; it generates code that takes full advantage of Iceberg's capabilities. Output tables are created with appropriate Iceberg table properties, partition specifications are configured based on data characteristics, and write operations use Iceberg's native API for atomic commits.

Partition strategies are a critical aspect of Iceberg performance, and choosing the wrong strategy can negate much of Iceberg's benefit. MigryX's Merlin AI analyzes source data profiles, existing query patterns, and data distribution to recommend optimal partition specifications. For a time-series table, it might recommend days(event_date). For a high-cardinality dimension table, it might recommend bucket(16, customer_id). These recommendations are included in the generated code as configured table properties.
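To make the two partition specs concrete, here is a small helper that renders the corresponding Spark SQL DDL for Iceberg tables. It is an illustration only and does not represent MigryX's actual generated output; create_table_ddl, the lake.db catalog and namespace, and the column lists are hypothetical.

```python
def create_table_ddl(table: str, columns: list, partition_transforms: list) -> str:
    """Render a Spark SQL CREATE TABLE statement for an Iceberg table."""
    cols = ", ".join(f"{name} {dtype}" for name, dtype in columns)
    parts = ", ".join(partition_transforms)
    return f"CREATE TABLE {table} ({cols}) USING iceberg PARTITIONED BY ({parts})"

# Time-series table: one partition per day of event_date.
events_ddl = create_table_ddl(
    "lake.db.events",
    [("event_id", "bigint"), ("event_date", "date")],
    ["days(event_date)"],
)

# High-cardinality dimension table: rows hashed into 16 buckets by customer_id.
customers_ddl = create_table_ddl(
    "lake.db.customers",
    [("customer_id", "bigint"), ("name", "string")],
    ["bucket(16, customer_id)"],
)

# With a SparkSession already configured for an Iceberg catalog (an assumption),
# each statement could be executed as spark.sql(events_ddl).
```

Because the transforms live in table metadata, queries filter on event_date or customer_id directly and never reference the partition layout.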

Schema evolution is handled automatically during the conversion process. SAS data types (8-byte floating point, fixed-width character, SAS dates as days since January 1, 1960) are mapped to appropriate Iceberg types (decimal, string, timestamp) with explicit precision preservation. When source schemas change between program versions, MigryX generates ALTER TABLE statements that leverage Iceberg's metadata-only schema evolution.
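The date mapping is worth spelling out, because SAS and most modern systems count from different epochs. Below is a minimal sketch of the conversion, assuming SAS date values arrive as numbers of days since January 1, 1960 and missing values arrive as None; sas_date_to_date is a hypothetical helper, not part of any MigryX or Iceberg API.

```python
from datetime import date, timedelta
from typing import Optional

SAS_EPOCH = date(1960, 1, 1)

def sas_date_to_date(sas_days: Optional[float]) -> Optional[date]:
    """Convert a SAS date value (days since 1960-01-01) to a calendar date.

    SAS stores dates as 8-byte floats, so the value is truncated to whole
    days. A missing value (assumed to arrive as None) stays None.
    """
    if sas_days is None:
        return None
    return SAS_EPOCH + timedelta(days=int(sas_days))

# The Unix epoch falls 3653 days after the SAS epoch (ten years, three leap days).
unix_epoch = sas_date_to_date(3653)
```

Skipping this offset is a classic migration bug: a raw SAS date interpreted as days since 1970 lands exactly ten years and a few days late.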

Column-level lineage from the conversion is registered in the Iceberg catalog. This means that downstream consumers can trace any column in an Iceberg table back through the transformation logic to its original source in the legacy platform. This lineage metadata is critical for regulatory compliance, impact analysis, and data governance.

MigryX + Iceberg

MigryX generates PySpark code that writes to Iceberg tables natively — with AI-recommended partition strategies, schema evolution handling, and lineage registered in your Iceberg catalog.

The combination of Iceberg's open format with MigryX's automated conversion creates a migration path that is both technically sound and operationally efficient. Legacy ETL code becomes Iceberg-native PySpark. Legacy data becomes Iceberg tables on object storage. Legacy metadata becomes Iceberg catalog entries with full lineage. And the resulting platform is open, multi-engine, and vendor-neutral from day one.

Why MigryX Delivers Superior Migration Results

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Production-ready output: MigryX generates code that passes code review and runs in production — not prototype-quality output that needs weeks of cleanup.

- Platform optimization: Converted code leverages target platform-specific features for maximum performance and cost efficiency.

- 25+ source technologies: Whether migrating from SAS, Informatica, DataStage, SSIS, or any of 25+ legacy technologies, MigryX handles it.

- Automated documentation: Every conversion decision is documented with before/after code mappings and transformation rationale.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
